Geocode your addresses for free with Python and Google

For a recent project, I ported the “batch geocoding in R” script over to Python. The script allows geocoding of large numbers of string addresses to latitude and longitude values using the Google Maps Geocoding API. The Google Geocoding API is one of the most accurate geocoding APIs out there at the moment.

The script geocodes addresses up to the daily limit each day, and then waits until Google refills your allowance before continuing. You can leave the script running on a remote server (I use Digital Ocean, where you can get a free $10 server with my referral link), and over the course of a week, geocode nearly 20,000 addresses.

For Ireland, the Google Geocoder is also a sneaky way to get a large list of Eircode codes for string addresses that you may have. Google integrated Eircode information with their mapping data in Ireland in September 2016.

Jump straight to the script here.

Geocoding API Limits

There are a few options with respect to Google and your API key, depending on whether you want results fast and are willing to pay, or are in no rush and want to geocode for free:

- With no API key, the script will work at a slow rate of approximately 1 request per second, up to the free limit of 2,500 geocoded addresses per day.

- Get a free API key to raise the rate to 50 requests per second, still limited to 2,500 per day. API keys are easily generated at the Google Developers API Console. You’ll need the “Google Maps Geocoding API”: find it, press enable, and then look under “credentials”.

- Associate a payment method or billing account with Google and your API key, and have limitless fast geocoding results at a rate of $0.50 per 1000 additional addresses beyond the free 2,500 per day.
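To get a feel for the trade-off between the free and paid options, here is a small back-of-the-envelope sketch. It only uses the figures quoted above (2,500 free addresses per day, $0.50 per 1,000 beyond that); the function names are my own and Google's pricing may well have changed, so treat the numbers as illustrative.

```python
import math

FREE_DAILY_LIMIT = 2500      # free geocodes per day, as quoted above
PRICE_PER_1000_USD = 0.50    # paid rate beyond the free allowance, as quoted above


def days_if_free(total_addresses):
    """Days needed when staying inside the free daily allowance."""
    return math.ceil(total_addresses / FREE_DAILY_LIMIT)


def cost_if_same_day(total_addresses):
    """Cost in USD of geocoding everything in one day with billing enabled."""
    billable = max(0, total_addresses - FREE_DAILY_LIMIT)
    return billable / 1000 * PRICE_PER_1000_USD


print(days_if_free(20000), cost_if_same_day(20000))
```

So for 20,000 addresses the choice is roughly between waiting just over a week and paying a few dollars.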

Python Geocoding Script

The script uses Python 3, and can be downloaded along with some demonstration data from the “python batch geocoding” project on Github. There’s a requirements.txt file that will allow you to construct a virtualenv around the script, and you can run it indefinitely over ssh on a remote server using the “screen” command (“Screen” allows you to run terminal commands while logged out of an ssh session – really useful if you use cloud servers).

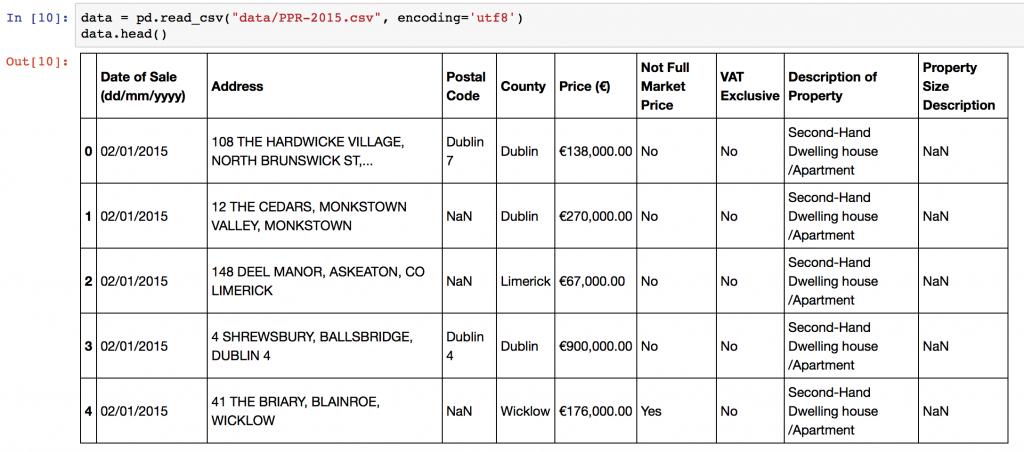

Input Data

The script expects an input CSV file with a column that contains addresses. The default column name is “Address”, but you can specify different names in the configuration section of the script. You can create CSV files from Excel using “Save As”->CSV. The sample data in the repository is the 2015 Property Price Register data for Ireland. Some additional preprocessing on addresses is performed to improve accuracy, adding County and Country level information. Remove or change these lines in the script as necessary!
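As a rough illustration of that preprocessing step, the sketch below reads an address column and appends county and country information. It is not the code from the script: it uses the standard library's csv module instead of pandas, the “County” column name is an assumption about the input file, and the sample rows are made up.

```python
import csv
import io

ADDRESS_COLUMN = "Address"  # default column name mentioned above
COUNTY_COLUMN = "County"    # assumed name of the county column


def load_addresses(csv_file, country="Ireland"):
    """Return one 'address, county, country' string per input row."""
    reader = csv.DictReader(csv_file)
    if ADDRESS_COLUMN not in (reader.fieldnames or []):
        raise ValueError("Missing Address column in input data")
    addresses = []
    for row in reader:
        parts = [row[ADDRESS_COLUMN].strip()]
        county = (row.get(COUNTY_COLUMN) or "").strip()
        if county:  # only append the county when the row has one
            parts.append(county)
        parts.append(country)
        addresses.append(", ".join(parts))
    return addresses


# Made-up sample rows standing in for a real input file.
sample = io.StringIO(
    'Address,County\n"5 Main Street, Swords",Dublin\n1 High Street,\n'
)
addresses = load_addresses(sample)
print(addresses)
```

Note that the column lookup is case sensitive, so “address” and “Address” are different columns as far as this check is concerned.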

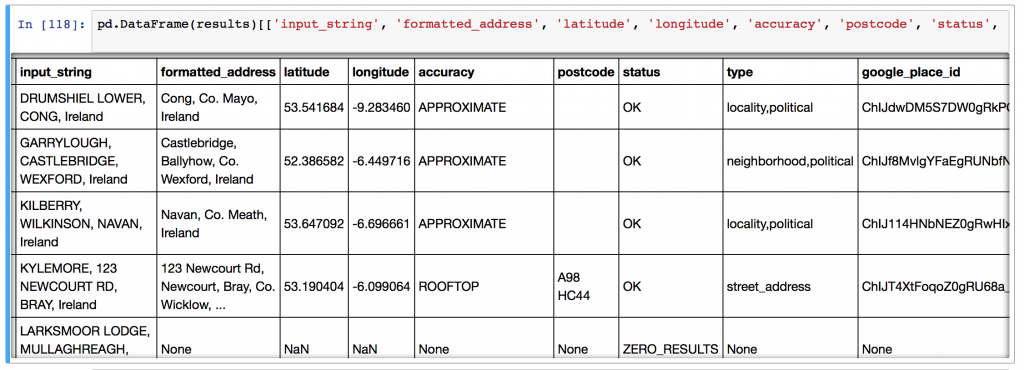

Output Data

The script will take each address and geocode it using the Google APIs, returning:

- the matching latitude and longitude,

- the cleaned and formatted address from Google,

- postcode of the matched address (Eircode in Ireland),

- accuracy of the match,

- the “type” of the location – “street, neighbourhood, locality”

- Google place ID,

- the number of results returned,

- the entire JSON response (see example below) from Google can be requested if there’s additional information that you’d like to parse yourself. Change this in the configuration section of the script.
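To make the list above concrete, here is a hedged sketch of how a Geocoding API response could be flattened into those fields. The response field names (results, geometry, location_type, address_components, place_id) follow Google's documented JSON format, but the output key names are my own illustration and the sample payload is hand-written with made-up values, not a real response.

```python
def parse_geocode_response(payload):
    """Flatten a Geocoding API JSON payload (already decoded to a dict)."""
    results = payload.get("results", [])
    output = {
        "status": payload.get("status"),
        "number_of_results": len(results),
        "formatted_address": None,
        "latitude": None,
        "longitude": None,
        "accuracy": None,
        "type": None,
        "postcode": None,
        "google_place_id": None,
    }
    if not results:
        return output

    best = results[0]  # use the first match only
    location = best["geometry"]["location"]
    postcodes = [
        component["long_name"]
        for component in best.get("address_components", [])
        if "postal_code" in component.get("types", [])
    ]
    output.update({
        "formatted_address": best.get("formatted_address"),
        "latitude": location["lat"],
        "longitude": location["lng"],
        "accuracy": best["geometry"].get("location_type"),
        "type": ",".join(best.get("types", [])),
        "postcode": postcodes[0] if postcodes else None,
        "google_place_id": best.get("place_id"),
    })
    return output


# Hand-written payload with placeholder values, for illustration only.
sample_payload = {
    "status": "OK",
    "results": [{
        "formatted_address": "1 Example Street, Dublin, Ireland",
        "place_id": "EXAMPLE_PLACE_ID",
        "types": ["street_address"],
        "geometry": {
            "location": {"lat": 53.35, "lng": -6.26},
            "location_type": "ROOFTOP",
        },
        "address_components": [
            {"long_name": "D01 XXXX", "short_name": "D01 XXXX",
             "types": ["postal_code"]},
        ],
    }],
}
parsed = parse_geocode_response(sample_payload)
empty = parse_geocode_response({"status": "ZERO_RESULTS", "results": []})
```

Returning the same keys even when there are no results keeps every row of the output file the same shape.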

Script Setup

To set up the script, optionally insert your API key, your input file name, input column name, and your output file name, then simply run the code with “python3 python_batch_geocode.py” and come back in (<total addresses>/2500) days! Each time the script hits the geocoding limit, it backs off for 30 minutes before trying again with Google.
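The back-off behaviour can be sketched roughly as follows. This is a simplified illustration rather than the script's actual loop: the geocode argument stands in for whatever function talks to Google, and the sleep argument is only there so the example can be run without actually waiting 30 minutes. OVER_QUERY_LIMIT is the status Google returns when the quota is exhausted.

```python
import time

BACKOFF_SECONDS = 30 * 60  # wait 30 minutes after hitting the limit


def geocode_all(addresses, geocode, sleep=time.sleep):
    """Geocode every address, pausing whenever the query limit is hit."""
    results = []
    for address in addresses:
        while True:
            result = geocode(address)
            if result["status"] == "OVER_QUERY_LIMIT":
                sleep(BACKOFF_SECONDS)  # back off, then retry the same address
                continue
            results.append(result)
            break
    return results


# Stand-in geocoder: the first call is over the limit, later calls succeed.
calls = {"count": 0}


def fake_geocode(address):
    calls["count"] += 1
    status = "OVER_QUERY_LIMIT" if calls["count"] == 1 else "OK"
    return {"input_string": address, "status": status}


naps = []  # records requested sleep durations instead of sleeping
results = geocode_all(["1 Main Street, Dublin, Ireland"], fake_geocode,
                      sleep=naps.append)
```

The important detail is that the same address is retried after the pause, so nothing is skipped when the limit is reached.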

Python Code Function

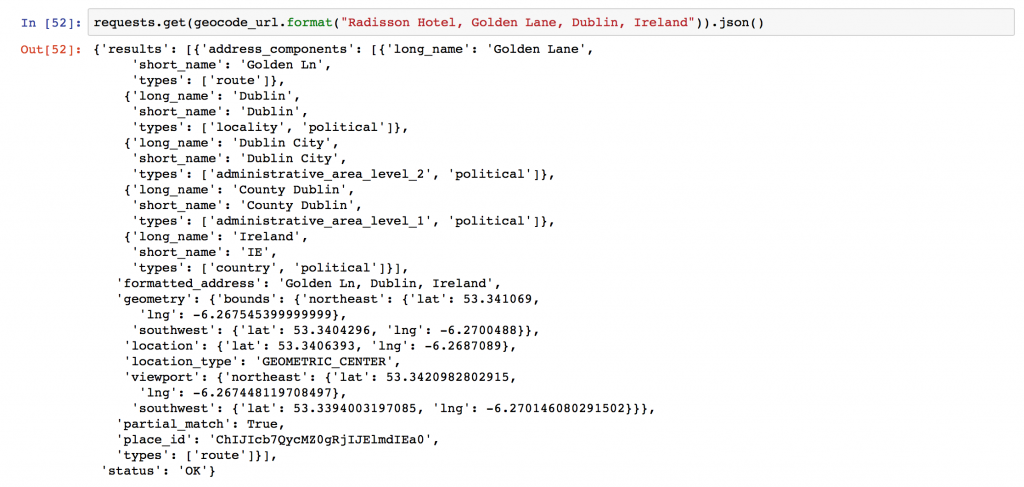

The script functionality is simple: there’s a central function “get_google_results()” that actually requests data from the Google API using the Python requests library, and then a wrapper around that starting at line 133 to handle data backup and geocoding query limits.
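For readers who want to see what such a request looks like, here is a minimal sketch of the request step. It is not the script's get_google_results() function: to keep it dependency-free it uses urllib from the standard library instead of requests, and the function names are hypothetical. The endpoint and the address and key parameters are those of the Google Geocoding web service.

```python
import json
import urllib.parse
import urllib.request

GEOCODE_ENDPOINT = "https://maps.googleapis.com/maps/api/geocode/json"


def build_geocode_url(address, api_key=None):
    """Build the request URL, URL-encoding the address string."""
    params = {"address": address}
    if api_key:
        params["key"] = api_key
    return GEOCODE_ENDPOINT + "?" + urllib.parse.urlencode(params)


def fetch_geocode(address, api_key=None, timeout=10):
    """Call the API and return the decoded JSON payload (needs network)."""
    url = build_geocode_url(address, api_key)
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return json.load(response)


# Building the URL needs no network access; "YOUR_API_KEY" is a placeholder.
url = build_geocode_url("London, England", api_key="YOUR_API_KEY")
print(url)
```

Keeping the URL construction separate from the network call makes it easy to check the encoded address before spending any of your daily quota.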

Reverse Geocoding

Reverse geocoding is the process of going from a set of GPS co-ordinates, and working out what the text address is. Google also provides an API for reverse geocoding, and the above script, with some edits, can be used with that API.

One of the blog’s readers, Joseph, has kindly put together this Github snippet with the adjustments!
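As a rough idea of the kind of edit involved (this is my own sketch, not Joseph's code), a reverse geocoding request goes to the same endpoint but sends a latlng parameter instead of address. The function name and the example co-ordinates are placeholders.

```python
import urllib.parse

GEOCODE_ENDPOINT = "https://maps.googleapis.com/maps/api/geocode/json"


def build_reverse_geocode_url(latitude, longitude, api_key=None):
    """Build a reverse geocoding URL from a latitude/longitude pair."""
    params = {"latlng": f"{latitude},{longitude}"}
    if api_key:
        params["key"] = api_key
    return GEOCODE_ENDPOINT + "?" + urllib.parse.urlencode(params)


# Placeholder co-ordinates and key, for illustration only.
reverse_url = build_reverse_geocode_url(53.3498, -6.2603, api_key="YOUR_API_KEY")
print(reverse_url)
```

The response has the same overall shape as a forward geocoding response, so most of the parsing code can be reused.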

Improving Geocoding Accuracy

There are a number of tips and tricks to improve the accuracy of your geocoding efforts.

- Append additional contextual information if you have it. For instance, in the example here, I appended “, Ireland” to the address since I knew all of my addresses were Irish locations. I could have also done some work with the “County” field in my input data.

- Simple text processing before upload can improve accuracy. Replacing st. with Street, removing unusual characters, replacing brdg with bridge, sq with Square, etc., all leaves Google with less to guess and can help.

- Try and ensure your addresses are as well formed as possible, with commas in the right places separating “lines” of the address.

- You can parse and repair your address strings with specialised address parsing libraries in python – have a look at postal-address, us-address (for US addresses), and pyaddress which might help out.
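The first two tips can be sketched with a few regular expressions. This is a minimal example under my own assumptions about which characters count as “unusual”, and the clean_address name is hypothetical. Be careful with blanket replacements: a rule like this would also turn “St. Stephen’s Green” into “Street Stephen’s Green”, so check the results against your own data.

```python
import re

# (pattern, replacement) pairs for the abbreviations mentioned above.
ABBREVIATIONS = [
    (re.compile(r"\bst\b\.?", re.IGNORECASE), "Street"),
    (re.compile(r"\bbrdg\b\.?", re.IGNORECASE), "Bridge"),
    (re.compile(r"\bsq\b\.?", re.IGNORECASE), "Square"),
]


def clean_address(raw, country="Ireland"):
    """Expand abbreviations, drop odd characters and append the country."""
    text = raw
    for pattern, replacement in ABBREVIATIONS:
        text = pattern.sub(replacement, text)
    # Keep letters, digits, whitespace and basic address punctuation only.
    text = re.sub(r"[^\w\s,.'-]", "", text)
    # Collapse runs of whitespace and trim stray commas at the ends.
    text = re.sub(r"\s+", " ", text).strip(" ,")
    if not text.lower().endswith(country.lower()):
        text = f"{text}, {country}"
    return text


print(clean_address("12  Main st., Dublin 2 #"))
```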

That is very cool! Well done.

I came across this post when trying to do what seems to be exactly the same thing, reverse geocoding the crap property price register CSV. It might be a long shot but do you happen to have the list already reverse geocoded (with bad items removed?) – I would be very grateful for it!


Awesome code. When I try to replicate your code I always get a NameError saying geocode_result is not defined at line 149 in your example.

Hi Mike, did you figure out the problem? I still have the same issue.

Hi Mike, Good spot. This can happen in the code if there’s an exception thrown by the get_google_results function. Try adding 'geocode_result = {"status": "starting"}' at line 139.

Shane, great job. Nice, clean code. Works like a charm!. Congrats.

Hey Julio. Thanks! Glad you found it useful and hope it saved you some geocoding time!

Hi, excellent script! I couldn´t run the code on my machine. Could you provide instructions to run this code locally or on a remote server (Digital Ocean)? Thanks so much

Hi, excellent script, what changes would I need to make if I wanted to keep some of the existing columns from the input csv?

Cheers

Hi Matthew, I’m glad you found it useful. I think if you’d like, you would be better off allowing the script to run as is. Then load both datasets into a workspace afterwards and you can merge on the old column. Both output and input files should have the same number of rows so the existing column will match up nicely.

Hi, very good work on the script, perfectly explained.

I have a quick question, is there a way of including the original columns from the input file into the output file ?

As of today, you only catch the Address column and output it as “input_string”. But I would also like to output one extra field that I have in my input file.

Thank you for your help

Hi John. Yes, if you want to. Have a look at my answer to Matthew for a hack for this. Otherwise, change the section where I created the “addresses” list to extract multiple columns, and use the to_dict() function of Pandas to create a list of dictionaries. Then in the main loop, add your new column to the geocode_result dictionary before it’s added to the results list!
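A rough sketch of that second approach is below. It is an illustration rather than a patch for the script: it reads the rows with csv.DictReader from the standard library (with pandas, df.to_dict("records") gives a similar list of dictionaries), the geocode argument stands in for the real geocoding function, and the “id” and “Price” columns are made-up examples.

```python
import csv
import io


def geocode_keeping_columns(rows, geocode, address_column="Address", keep=("id",)):
    """Geocode each row and copy the chosen input columns into the result."""
    results = []
    for row in rows:
        geocode_result = dict(geocode(row[address_column]))
        geocode_result["input_string"] = row[address_column]
        for column in keep:
            geocode_result[column] = row[column]  # carry the extra column over
        results.append(geocode_result)
    return results


def fake_geocode(address):
    """Stand-in for the real geocoder so the example runs offline."""
    return {"status": "OK", "formatted_address": address.upper()}


# Made-up input rows with a unique ID and one extra column.
sample = io.StringIO(
    "id,Address,Price\n17,1 Main Street,250000\n42,2 High Street,310000\n"
)
rows = list(csv.DictReader(sample))
results = geocode_keeping_columns(rows, fake_geocode, keep=("id", "Price"))
```

Because the unique ID travels with each result, the output can be joined back to the original data even if some rows fail or are reordered.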

Would you be able to go into more detail on how to accomplish this? I’ve managed to alter the script to call a stored procedure from an SQL dbase and then load that into the pandas dataframe. However, I need to maintain a unique ID from the origin data in the output table. I’d be happy to pay you for your time.

Thanks, that did the trick.

Hi, excellent work. What happens if we kill the script before completion? Does it still generate a “temporary” csv file? Thank you

Hi Joe, for safety’s sake, in case you lose a lot of work, the script saves the work in progress to a temporary csv file after every 500 geocoded addresses. I learned that lesson the hard way.
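The idea can be sketched like this. It is a simplified stand-in for what the script does with pandas: the helper names are hypothetical, the csv module replaces DataFrame.to_csv, and the demo uses a backup interval of 2 and a fake geocoder so it runs instantly.

```python
import csv
import os
import tempfile

BACKUP_EVERY = 500  # save work in progress after this many addresses


def write_results(results, path):
    """Write a list of result dictionaries to a UTF-8 encoded CSV file."""
    fieldnames = sorted({key for row in results for key in row})
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(results)


def geocode_with_backups(addresses, geocode, output_filename,
                         backup_every=BACKUP_EVERY):
    """Geocode all addresses, writing a '_bak' file at regular intervals."""
    results = []
    for address in addresses:
        results.append(geocode(address))
        if len(results) % backup_every == 0:
            write_results(results, f"{output_filename}_bak")
    write_results(results, output_filename)
    return results


# Demo with a stand-in geocoder and a small backup interval.
with tempfile.TemporaryDirectory() as folder:
    output_filename = os.path.join(folder, "output.csv")
    geocode_with_backups(
        ["a", "b", "c", "d", "e"],
        lambda address: {"input_string": address, "status": "OK"},
        output_filename,
        backup_every=2,
    )
    with open(output_filename + "_bak", encoding="utf-8", newline="") as handle:
        backup_rows = list(csv.DictReader(handle))
    with open(output_filename, encoding="utf-8", newline="") as handle:
        final_rows = list(csv.DictReader(handle))
```

If the process is killed part-way through, the “_bak” file still holds everything up to the last completed backup interval.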

This works great for addresses in english, but it gives errors when encountering letters with french or spanish accents. How might we adjust it to not error out, in those instances?

Hi Lawrence – great that you found it useful. Which part of the script gives you issues with the non-standard characters? Is it reading in the file, or the upload to Google? What are the errors that you’re finding?

UnicodeEncodeError: 'ascii' codec can't encode character u'\xa0' in position 20: ordinal not in range(128)

Also your suggestion to add encoding='utf-8' to to_csv did seem to solve the problem.

It appears to have trouble writing the records that have foreign characters with accents back to file, and it aborts. When I look at the last record written back, the very next record on my input file would require the foreign characters, therefore it fails.

Ok, it might be a character encoding issue on the to_csv() calls. Try adding the parameter encoding='utf-8' to the to_csv() function calls. This might help, especially if you’re using Python 2.

So line 168 would become:

pd.DataFrame(results).to_csv("{}_bak".format(output_filename), encoding='utf-8')

You’ll need to edit line 173 too.

Thanks, I will try those suggestions.

Again a nice post! Could almost use the script as-is. Wrapped it to use with Flask and added some caching to limit the API calls. The only thing missing is multiple results if you request a non-unique locality like “Wageningen” (Netherlands + Suriname) or “Elst, Netherlands”. The Google Geocode API returns only one entry. I guess I should rewrite the stuff a bit using the Google Places API instead of the Google Geocode API. Thanks again for a rocket start!

perfect! thanks!

Hey Shane,

Amazing code! I am entering the addresses as place names with their cities and countries but I want one of the output columns as the Place’s name itself. How can I get that as an output from Google API?

For example:

One of the input addresses is

Theoretical Physics Institute,University of Minnesota,,,Theoretical Physics Institute,University of Minnesota

I want the output as University of Minnesota in one of the columns, as in the cleaned result. Is there any way I can achieve this?

It would be great if you could help me.

Thanks!

Hi Shane – Thanks for this.

Could your code be modified to produce reverse geocode results?

I’ve figured it out. Minor modifications required, largely to how the data is presented to the program.

Thanks again.

Hi Joseph, very nice – if you have a chance to share the code on Github – I can provide a link here. A few people have asked about reverse Geocoding.

This should do: https://github.com/jdeferio/Reverse_Geocode

Apologies for the formatting on GitHub, I’ve never really used it before.

Cool! No problem – I’ve updated the text now to provide a link to your solution. Thanks Joseph!

Hey

Although this itself is really cool, can you help me out with the following.

Lets say I have a list of 1000 restaurants that I need to add on my website. I have the names and nothing else (I have the address but they aren’t formatted)

I wanted to follow this procedure:

1) Find place ID (using https://developers.google.com/maps/documentation/javascript/examples/places-placeid-finder)

2) Put Name and Place ID in a csv file

3) Run a similar script like yours to automatically populate the address and lat/long into an output file.

4) Fetch other Google places details from the API, like ratings, no of reviews, opening hours etc (obviously only the fields that are possible to retrieve)

Can you help me out with step 3 and 4?

THanks for this awesome code!

I’m getting the error : ConnectionError: Problem with test results from Google Geocode – check your API key and internet connection.

So I tried to insert in the code: geocode_result = {'status': 'starting'} ... still having the same error. What’s wrong here?

    # Ensure, before we start, that the API key is ok/valid, and internet access is ok
    test_result = get_google_results("London, England", API_KEY, RETURN_FULL_RESULTS)
    if (test_result['status'] != 'OK') or (test_result['formatted_address'] != 'London, UK'):
        logger.warning("There was an error when testing the Google Geocoder.")
        raise ConnectionError('Problem with test results from Google Geocode – check your API key and internet connection.')

    geocode_result = {'status': 'starting'}

    # Create a list to hold results
    results = []
    # Go through each address in turn
    for address in addresses:
        # While the address geocoding is not finished:
        geocoded = False

Hi, thanks for sharing. So I have tried but I do not manage to keep the original information in the “Output Table”. In your example, I would like to have the data on the data of sale, prices, VAT, etc. But after running the code they are lost and I only get the coordinates and the formatted address. I would appreciate your thoughts.

I am using this code but i get the following error:

Missing Address column in input data

Although there is an address column in the data i am working on!

Hi Karim – make sure that you have changed the “address_column_name” to the column name you are using, and remember that this will be case sensitive.

Hello,

I am using this code but first time when it hits OVER_QUERY_LIMIT , it keeps giving same error even after 60 minutes.

Please help

I am using this code but once the code hits OVER_QUERY_LIMIT, it keep giving this error even after 60 minutes. Please help to resolve this issue

Hi Mrunali – you have to have an API key set in the code for this to work; there have been recent changes to the Google API that require this.

Hi Shane. Firstly, thank you for your code. I’m trying to use it for a college project.

I have an API key set and it quickly decodes the first 2,500 addresses. But then it stops and never restarts, even if I leave it running for days (it keeps repeating the Over Query Limit). I have talked to Google and although they reply they seem unable to help. I wonder if you know what the problem might be?

I think it is that the credit never seems to be accessible or quotas are incorrectly set up or something.

If I manually stop and restart the script it will again decode the first 2,500 addresses. (I have only ever manually restarted the next day).

Thanks!!

Thanks for the wonderful code. I tried it previously and it worked but now when retrying getting following error

There was an error when testing the Google Geocoder.

Traceback (most recent call last):

File "welcomeTrustLoc.py", line 130, in

raise ConnectionError('Problem with test results from Google Geocode – check your API key and internet connection.')

NameError: name 'ConnectionError' is not defined

Looking forward for your help

Thanks

VERY helpful! Thanks A LOT! You are amazing.

Hi there, This is a great piece of scripting.

I’m trying to get it working for myself but I’m getting hit with the following error

"UnicodeEncodeError: 'ascii' codec can't encode character u'\xed' in position 15: ordinal not in range(128)"

I’m using a straight download from the PPR website and saving my selected data as CSV.

Any ideas on how to solve this issue?

Thanks in advance,

Martin

Is there a way to adapt this code to utilize the 50 requests per second? I currently tested the code to run and have found that it does about 100 addresses a minute, just wondering which techniques i could use to maximize the speed.

Amazing job! I have a question. Do you have an idea or solution if I would like to do the inverse, that is, obtain the name or postal address of a point from its longitude and latitude?

Currently I work with a dataset of taxis from New York City, and I aim to generate another column with the address.

If you have another solution to this specific problem I would appreciate it if you would share it with me. Thanks!

Just like the comment above, I’m interested in speeding up the execution of this script. Additionally, is there a way to adjust the script so that it parses the entire contents of the json feed into different columns?

The code runs but I get the message “Request Denied” so the only results dumped into my input file is the addresses.

Worked perfectly and saved me a lot of time. Thank you very much!

Hello, I just used this and it’s awesome! i only did a few tweaks and it gave me the info i needed how i needed it. THANK YOU VERY MUCH!